Research and Projects

Fairness in Recommendation Systems

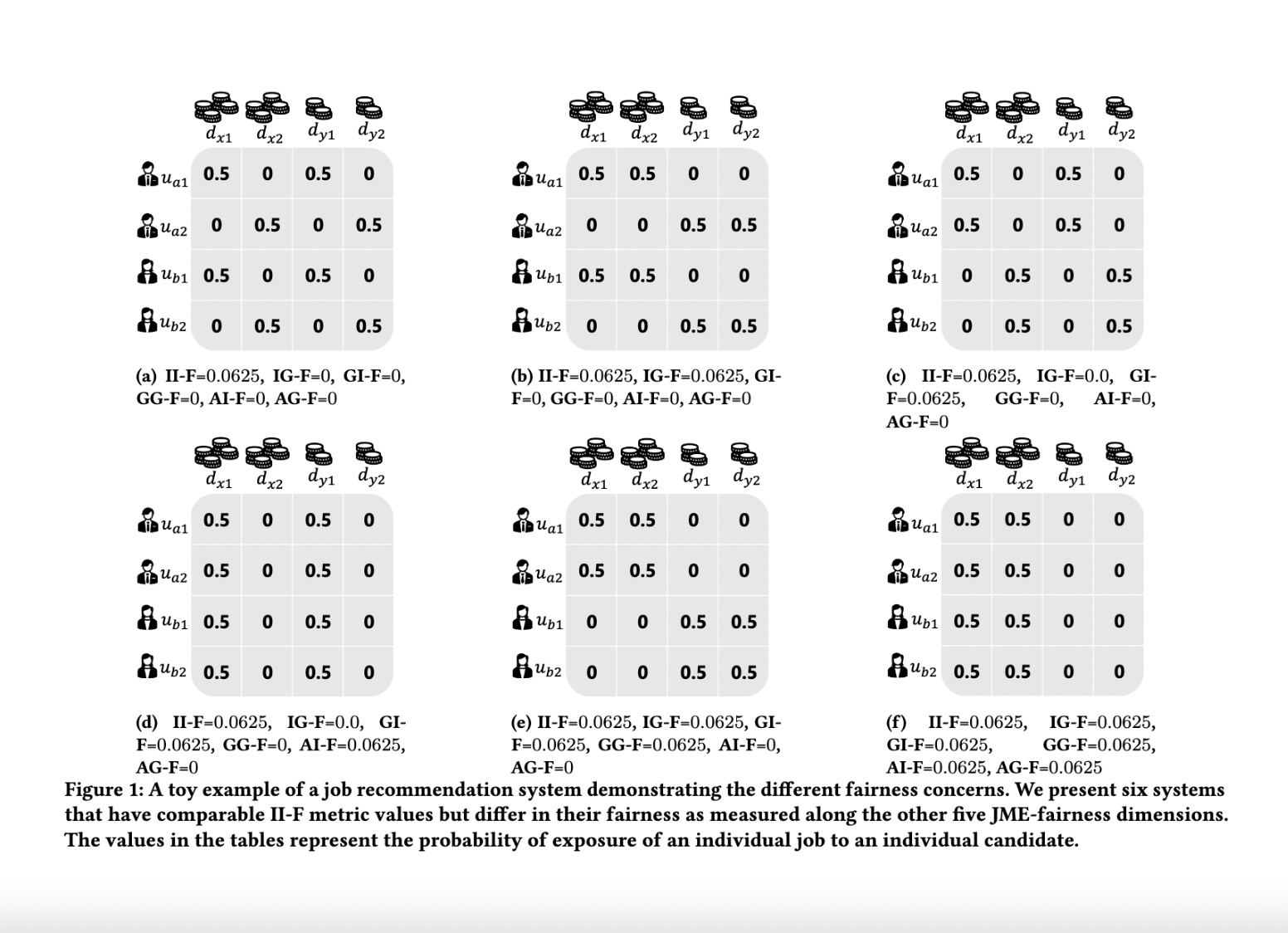

Prior research on exposure fairness in recommender systems has focused mostly on disparities in the exposure of individual items or groups of items to individual users of the system. The problem of how individual items or groups of items may be systemically under- or over-exposed to groups of users, or even to all users, has received relatively less attention. However, such systemic disparities in information exposure can result in observable social harms, such as withholding economic opportunities from historically marginalized groups (allocative harm) or amplifying gendered and racialized stereotypes (representational harm). Previously, Diaz et al. developed the expected exposure metric, which incorporates existing user browsing models developed for information retrieval, to study fairness of content exposure to individual users. We extend their framework to formalize a family of exposure fairness metrics that model the problem jointly from the perspective of both consumers and producers. Specifically, we consider group attributes for both types of stakeholders to identify and mitigate fairness concerns that go beyond individual users and items towards more systemic biases in recommendation. Furthermore, we study and discuss the relationships between the different exposure fairness dimensions proposed in this paper, and demonstrate how stochastic ranking policies can be optimized towards these fairness goals.
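As a rough illustration of the idea, the sketch below computes expected exposure for a toy stochastic ranking policy and aggregates it by item group. It assumes a rank-biased-precision style browsing model in which the user inspects rank k with probability gamma^k. The function names, the `gamma` value, and the toy policy are hypothetical and are not taken from the paper.

```python
from collections import defaultdict


def expected_exposure(policy, gamma=0.5):
    """Expected exposure per item under a stochastic ranking policy.

    policy: list of (probability, ranking) pairs, where ranking is a list of item ids.
    gamma:  assumed patience parameter of the browsing model.
    """
    exposure = defaultdict(float)
    for prob, ranking in policy:
        for rank, item in enumerate(ranking):
            # Assumed browsing model: rank k is inspected with probability gamma**k.
            exposure[item] += prob * gamma ** rank
    return dict(exposure)


def group_exposure(exposure, item_group):
    """Sum item-level exposure within each item (producer) group."""
    totals = defaultdict(float)
    for item, value in exposure.items():
        totals[item_group[item]] += value
    return dict(totals)


# Toy policy: two rankings, each shown half of the time.
policy = [(0.5, ["a", "b", "c"]), (0.5, ["b", "a", "c"])]
exposure = expected_exposure(policy, gamma=0.5)
groups = group_exposure(exposure, {"a": "g1", "b": "g1", "c": "g2"})
```

Comparing the group totals against a target distribution is one way to surface the group-level disparities discussed above. The same aggregation could be repeated per user group to cover the consumer side.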

Selected publications:

Research Interests

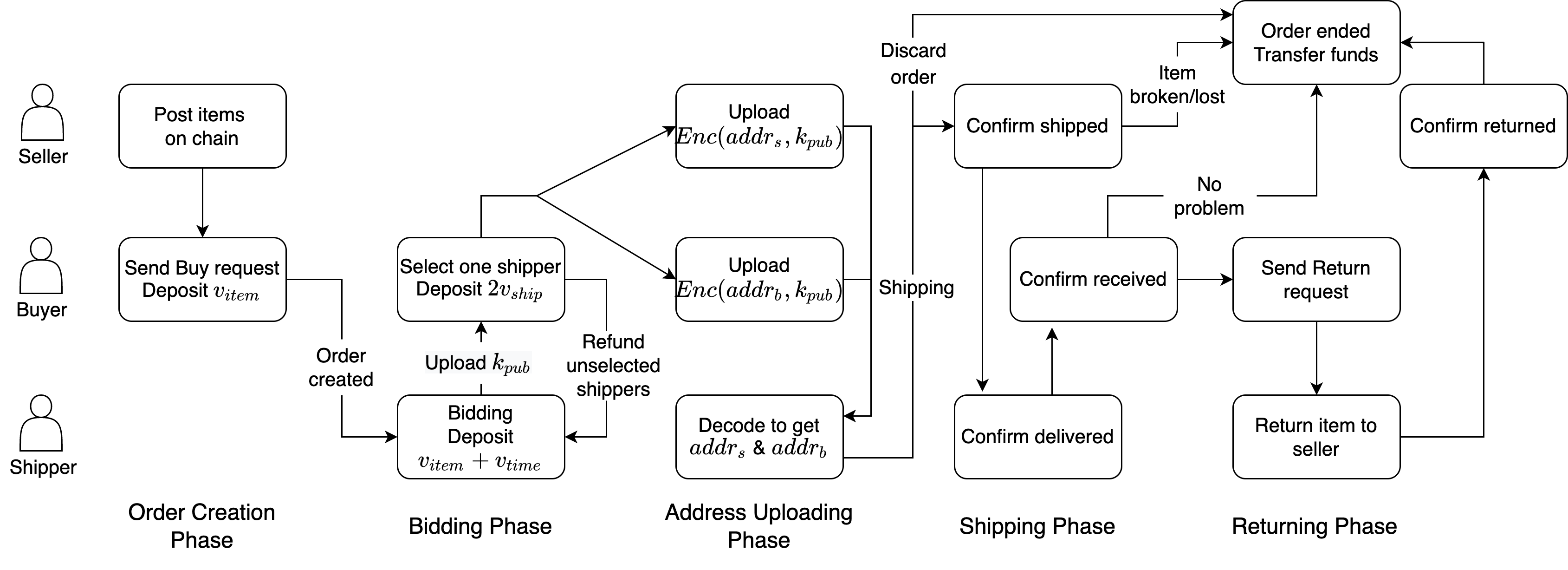

As Web3 thrives with the progress of blockchain technologies, this group aims to carry out cutting-edge research on advanced cryptography (e.g., zero-knowledge proofs), the metaverse (e.g., NFT, DAO, GameFi), blockchain (e.g., DApps, DeFi, consensus protocols), and cloud infrastructure (e.g., RPC caching, cloud scheduling). While the research topics are broad, we focus on two main directions. The first is to build Web3 counterparts of infrastructure that already exists in the Web2 world and to bridge the gap between Web3 and Web2, for example with decentralized e-commerce. The second is to apply advanced cryptographic tools and AI models to analyze and improve existing Web3 solutions, for example by using data-driven methods to analyze fraud and scams in blockchain transactions and intervening through smart contracts to protect users with cryptographic security.

Selected publications:

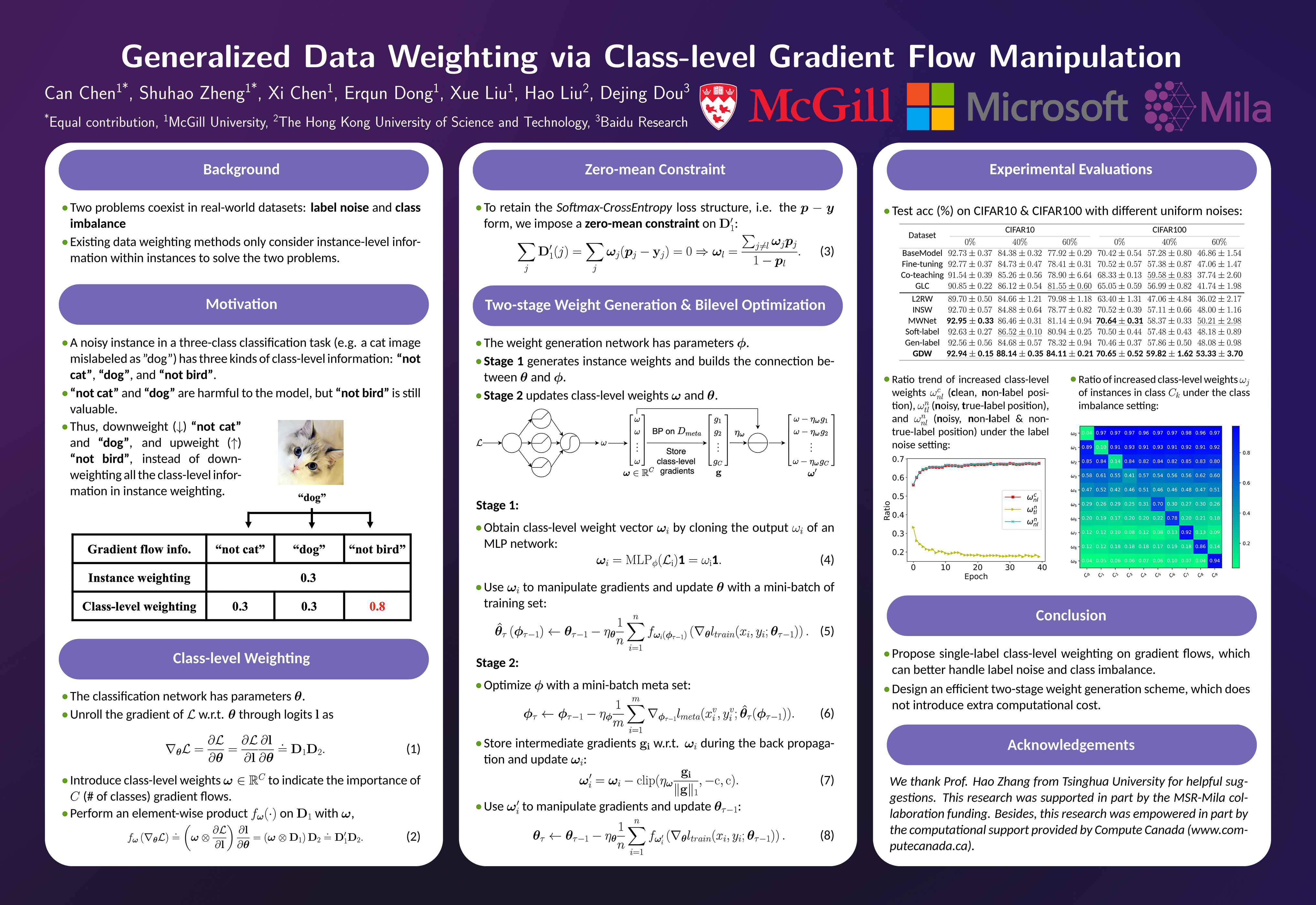

Label noise and class imbalance are two major issues coexisting in real-world datasets. To alleviate the two issues, state-of-the-art methods reweight each instance by leveraging a small amount of clean and unbiased data. Yet, these methods overlook class-level information within each instance, which can be further utilized to improve performance. To this end, in this paper, we propose Generalized Data Weighting (GDW) to simultaneously mitigate label noise and class imbalance by manipulating gradients at the class level. To be specific, GDW unrolls the loss gradient to class-level gradients by the chain rule and reweights the flow of each gradient separately. In this way, GDW achieves remarkable performance improvement on both issues. Aside from the performance gain, GDW efficiently obtains class-level weights without introducing any extra computational cost compared with instance weighting methods. Specifically, GDW performs a gradient descent step on class-level weights, which only relies on intermediate gradients. Extensive experiments in various settings verify the effectiveness of GDW. For example, GDW outperforms state-of-the-art methods by 2.56% under the 60% uniform noise setting in CIFAR10. Our code is available at https://github.com/GGchen1997/GDW-NIPS2021.
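To make the class-level view concrete, here is a minimal sketch that assumes a softmax cross-entropy loss, whose gradient with respect to the logit of class c is p_c - y_c. Instance weighting would scale all of these components by one scalar. The sketch instead scales each component by its own weight. The weights are fixed by hand for illustration, whereas GDW learns them with a gradient descent step. All function names are hypothetical and this is not the authors' implementation.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def class_level_gradient(logits, label, class_weights):
    """Cross-entropy gradient w.r.t. the logits, reweighted per class.

    The unweighted component for class c is p_c - y_c. Each component is
    multiplied by its own (here hand-picked) weight before flowing backward.
    """
    probs = softmax(logits)
    grads = []
    for c, p in enumerate(probs):
        target = 1.0 if c == label else 0.0
        grads.append(class_weights[c] * (p - target))
    return grads


logits = [2.0, 0.5, -1.0]
uniform = class_level_gradient(logits, label=1, class_weights=[1.0, 1.0, 1.0])
# Down-weight the gradient flowing through class 0, e.g. if it is suspected noise.
reweighted = class_level_gradient(logits, label=1, class_weights=[0.2, 1.0, 1.0])
```

With uniform weights the components sum to zero, as expected for softmax cross-entropy. With non-uniform weights only the chosen class's contribution changes.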

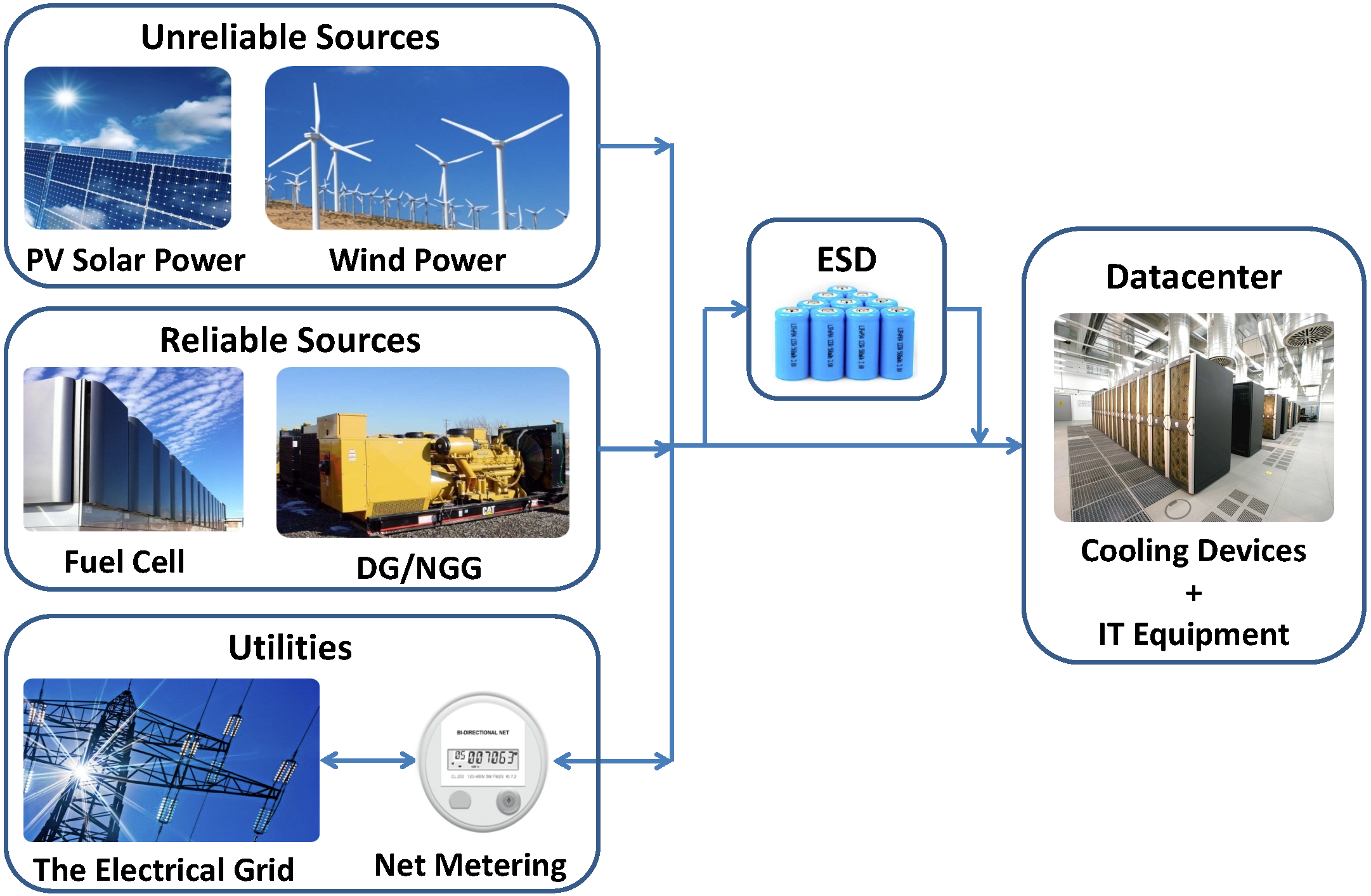

Sustainable Datacenters

The computing capacity and scale of data centers are increasing to meet the soaring demand for IT applications and services. A mega data center can host thousands of servers and require up to tens of megawatts of electricity. This high power consumption has two serious consequences. First, data center operators may face electricity bills of millions of dollars annually. Second, the enormous energy consumption can harm the environment. This project focuses on data center sustainability, aiming to cut operational costs and increase the integration of renewable energy in data centers.

Selected publications:

- F. Kong and X. Liu, "GreenPlanning: Optimal Energy Source Selection and Capacity Planning for Green Datacenters", in the ACM/IEEE 7th International Conference on Cyber-Physical Systems (ICCPS), 2016, pp. 1-10. (Acceptance rate: 27.8%)

- F. Kong and X. Liu, "A Survey on Green-Energy-Aware Power Management for Datacenters", in ACM Computing Surveys (CSUR), 2015, pp. 30:1-30:38. (IF: 3.37).

- A. Rahman, X. Liu and F. Kong, "A Survey on Geographic Load Balancing based Data Center Power Management in the Smart Grid Environment", in IEEE Communications Surveys and Tutorials (COMST), 2014, pp. 214-233. (IF: 6.81).

- X. Liu and F. Kong, "Datacenter Power Management in Smart Grids", in Foundations and Trends in Electronic Design Automation, now publishers Inc., 2015, pp. 1-98. (Monograph)

- F. Kong, X. Lu, M. Xia, X. Liu and H. Guan, "Distributed Optimal Datacenter Bandwidth Allocation for Dynamic Adaptive Video Streaming", in the 23rd ACM Multimedia (MM), 2015, pp. 531-540. (Full paper, acceptance rate: 20.6%)

- F. Kong, X. Liu and L. Rao, "Optimal Energy Source Selection and Capacity Planning for Green Datacenters", in the 40th ACM International Conference on Measurement and Modeling of Computer Systems (SIGMETRICS), 2014, pp. 575-576. (Extended abstract)

- Z. Sun, F. Kong, X. Liu, X. Zhou and X. Chen, "Intelligent Joint Spatio-temporal Management of Electric Vehicle Charging and Data Center Power Consumption", in the 5th International Green Computing Conference (IGCC), 2014, pp. 1-8.

- C. Dong, F. Kong, X. Liu and H. Zeng, "Green Power Analysis for Geographical Load Balancing Based Datacenters", in the 4th International Green Computing Conference (IGCC), 2013, pp. 1-8.

EV: Green Charging

The power flows incurred by electric vehicles (EVs) and renewable energy are both crucial to the future smart grid. Yet how to integrate them into power systems remains largely unexplored. In the Cyber-Physical Intelligence Laboratory at McGill University, we are developing a series of innovative technologies and market strategies to achieve this integration in an efficient, reliable and real-time manner.

Selected publications:

- Q. Wang, X. Liu, J. Du, and F. Kong, "Smart Charging for Electric Vehicles: A Survey From the Algorithmic Perspective," IEEE Communications Surveys Tutorials, vol. 18, no. 2, pp. 1500–1517, Secondquarter 2016.

- F. Kong, Q. Xiang, L. Kong, and X. Liu, "On-Line Event-Driven Scheduling for Electric Vehicle Charging via Park-and-Charge", accepted by the 36th IEEE Real-Time Systems Symposium (RTSS), 2016. (Acceptance rate: 23.4%)

- F. Kong, X. Liu, Z. Sun, and Q. Wang, "Smart Rate Control and Demand Balancing for Electric Vehicle Charging", in the ACM/IEEE 7th International Conference on Cyber-Physical Systems (ICCPS), 2016, pp. 1-10. (Acceptance rate: 27.8%)

- F. Kong and X. Liu, "Distributed Deadline and Renewable Aware Electric Vehicle Demand Response in the Smart Grid", in the 36th IEEE Real-Time Systems Symposium (RTSS), 2015, pp. 23-32. (Acceptance rate: 22.5%)

- F. Kong, C. Dong, X. Liu and H. Zeng, "Quantity vs Quality: Optimal Harvesting Wind Power for the Smart Grid", in Proceedings of the IEEE (PIEEE), 2014, pp. 1762-1776. (IF: 4.93).

- F. Kong, C. Dong, X. Liu and H. Zeng, "Blowing Hard Is Not All We Want: Quantity vs Quality of Wind Power in the Smart Grid", in 33rd Annual IEEE International Conference on Computer Communications (INFOCOM), 2014, pp. 2813-2821. (Acceptance rate: 19.5%)

- Z. Sun, F. Kong, X. Liu, X. Zhou and X. Chen, "Intelligent Joint Spatio-temporal Management of Electric Vehicle Charging and Data Center Power Consumption", in the 5th International Green Computing Conference (IGCC), 2014, pp. 1-8.

VSmart: DSRC-based Smart Vehicle Testbed

VSmart is a DSRC-enabled smart vehicle testbed established at Cyber-Physical Intelligence Laboratory (CPS-Lab), McGill University, and is supported by NSERC and General Motors Company. VSmart explores and illustrates the potential to enhance driving safety and traffic efficiency with V2V communications (especially DSRC).

Selected Publications

- L. Kong, X. Chen, X. Liu, L. Rao. "FINE: Frequency-divided Instantaneous Neighbors Estimation System in Vehicular Networks". IEEE PerCom Concise Paper, St. Louis, Missouri, USA, 2015.

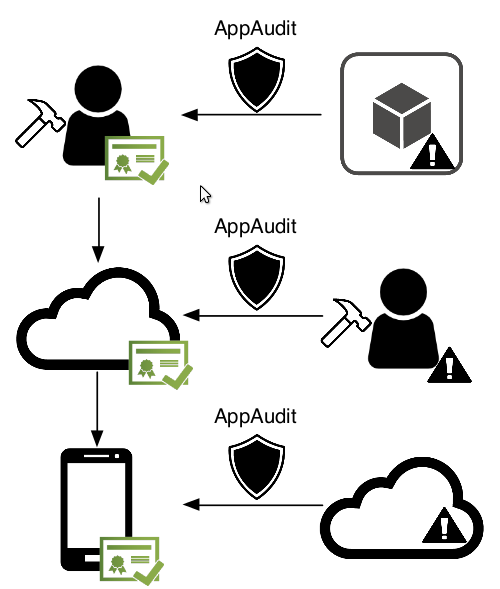

We design AppAudit, a program analysis framework that checks whether an Android application leaks sensitive personal data. AppAudit is designed for minimalism, using as little memory and time as possible. The current prototype can vet a real app with 256MB of memory in 5 seconds on average. AppAudit supports three use cases:

- mobile app developers could use AppAudit to check if their apps include any data-leaking libraries or modules

- the app market could use AppAudit to vet newly uploaded apps and remove data-leaking ones

- mobile users could use AppAudit to avoid installing data-leaking apps

Selected publications:

- M. Xia, L. Gong, Y. Lv, Zh. Qi, X. Liu: Effective Real-time Android Application Auditing. The 36th IEEE Symposium on Security and Privacy (IEEE S&P'15)

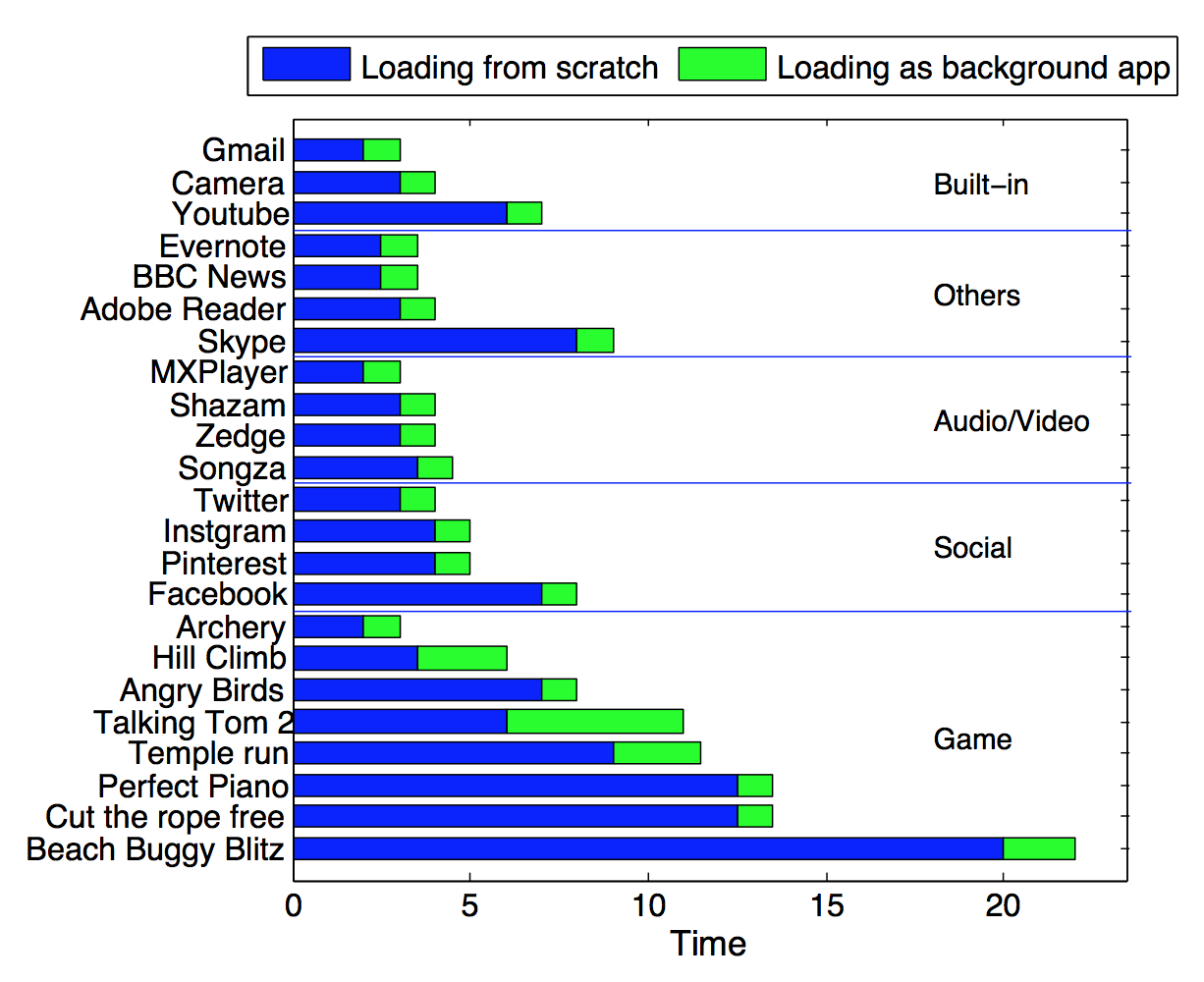

Mobile operating systems embrace new mechanisms that reduce energy consumption for common usage scenarios. The background app design is a representative implemented in all major mobile OSes. The OS keeps apps that are not currently interacting with the user in memory to avoid repeated app loading. This mechanism improves responsiveness and reduces the energy consumption when the user switches apps. However, we demonstrate that application errors, in particular memory leaks that cause system memory pressure, can easily cripple this mechanism. In this paper, we conduct experiments on real Android smartphones to 1) evaluate how the background app design improves responsiveness and saves energy; 2) characterize memory leaks in Android apps and outline its energy impact; 3) propose design improvements to retrofit the mechanism against memory leaks.

Selected publications:

- M. Xia, W. He, X. Liu, J. Liu: Why Application Errors Drain Battery Easily? A Study of Memory Leaks in Smartphone Apps. The 5th Workshop on Power-Aware Computing and Systems (HotPower'13)

Selected Publications

- L. Kong, L. He, X. Liu, Y. Gu, M. Wu, X. Liu. "Privacy-Preserving Compressive Sensing for Crowdsensing based Trajectory Recovery". IEEE ICDCS, Columbus, Ohio, USA, 2015.

mZig: Multi-Packet Reception in ZigBee

mZig is a novel physical layer design that enables a receiver to simultaneously decode multiple packets from different transmitters in ZigBee. As a low-power and low-cost wireless protocol, ZigBee has been widely used in sensor networks, cyber-physical systems, and smart buildings. Since ZigBee-based networks usually adopt a tree or cluster topology, convergecast scenarios, in which multiple transmitters need to send packets to one receiver, are common. For example, in a smart home, all appliances report data to one control plane via ZigBee. However, concurrent transmissions lead to severe collisions. Conventional ZigBee avoids collisions using backoff time, which introduces additional time overhead. More advanced methods resolve collisions instead of avoiding them. Among these, the state-of-the-art ZigZag requires m retransmissions to resolve one m-packet collision.

Selected publications:

- L. Kong, X. Liu. "mZig: Enabling Multi-Packet Reception in ZigBee". ACM MobiCom, Paris, France, 2015.

Performance Optimization and Tuning of 802.11 Wifi

Selected publications:

- A. J. Pyles, X. Qi, G. Zhou, M. Keally, and X. Liu, "SAPSM: Smart Adaptive 802.11 PSM for Smartphones," in Proceedings of the 2012 ACM Conference on Ubiquitous Computing, New York, NY, USA, 2012, pp. 11–20. (Acceptance rate = 19.3%, 58 out of 301).

- X. Xing, J. Dang, S. Mishra, and X. Liu, "A highly scalable bandwidth estimation of commercial hotspot access points," in 2011 Proceedings IEEE INFOCOM, 2011, pp. 1143–1151.

- A. J. Pyles, Z. Ren, G. Zhou, and X. Liu, "SiFi: Exploiting VoIP Silence for WiFi Energy Savings in Smart Phones," in Proceedings of the 13th International Conference on Ubiquitous Computing, New York, NY, USA, 2011, pp. 325–334. (Acceptance rate = 16.6%, 50 out of 302).

- X. Xing, S. Mishra, and X. Liu, "ARBOR: Hang Together Rather Than Hang Separately in 802.11 WiFi Networks," in 2010 Proceedings IEEE INFOCOM, 2010, pp. 1–9. (Acceptance rate = 17.5%, 276 out of 1575).

Aerial - Device-free activity recognition

Aerial is a technology that senses and distinguishes who you are, where you are and what you are doing using Wi-Fi signals already present in your house. With just a single device, you can monitor your entire home. No need to buy a variety of expensive sensors and invasive cameras. Right out of the box, the aerial cube is packed with features to help you become more aware of what is happening in your home. As long as there are standard Wi-Fi signals in the air, aerial is good to go.

Cloud Computing

Selected publications:

- Y. Hua, B. Xiao, and X. Liu, "NEST: Locality-aware approximate query service for cloud computing," in 2013 Proceedings IEEE INFOCOM, 2013, pp. 1303–1311. (Acceptance rate=17%, 280 out of 1613).

- Y. Hua, X. Liu, and D. Feng, "Neptune: Efficient remote communication services for cloud backups," in 2014 Proceedings IEEE INFOCOM, 2014, pp. 844–852. (Acceptance rate=19.4%, 320 out of 1645).

- Y. Hua, X. Liu, and H. Jiang, "ANTELOPE: A Semantic-Aware Data Cube Scheme for Cloud Data Center Networks," IEEE Transactions on Computers, vol. 63, no. 9, pp. 2146–2159, Sep. 2014.

- Y. Hua, X. Liu, and D. Feng, "Data Similarity-Aware Computation Infrastructure for the Cloud," IEEE Transactions on Computers, vol. 63, no. 1, pp. 3–16, 2014.

Big Data Processing and Machine Learning Systems and Applications

Update-Efficient and Parallel-Friendly Content-based Indexing System

A sheer volume of content generated by today's Internet services is stored in the cloud. An effective indexing method is important for providing this content to users on demand. Indexing methods that associate user-generated metadata with the content are vulnerable to inaccuracy caused by low-quality metadata. Content-based indexing does not depend on error-prone metadata, but state-of-the-art research focuses on developing descriptive features and misses the system-oriented considerations that arise when incorporating these features into practical cloud computing systems.

We propose an update-efficient and parallel-friendly content-based indexing system called Partitioned Hash Forest (PHF). PHF incorporates state-of-the-art content-based indexing models and multiple system-oriented optimizations. It contains an approximate content-based index and leverages the hierarchical memory system to support a high volume of updates. Additionally, content-aware data partitioning and a lock-free concurrency management module enable parallel processing of concurrent user requests. We evaluate PHF in terms of indexing accuracy and system efficiency by comparing it with the state-of-the-art content-based indexing algorithm and its variants. PHF achieves significantly better accuracy with less resource consumption. It is around 37% faster in update processing and delivers up to a 2.5X throughput speedup on a multi-core platform compared with other parallel-friendly designs.
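The text above does not spell out PHF's internals, so the following is only a generic sketch of what an approximate content-based index can look like. It uses random-hyperplane locality-sensitive hashing as a stand-in technique, and the class name, parameters, and feature vectors are all hypothetical. Items whose feature vectors fall on the same side of every hyperplane share a bucket, so an update is a single set insertion and the bucket key could also serve as a partition key.

```python
import random
from collections import defaultdict


class HyperplaneIndex:
    """Toy approximate index based on random-hyperplane LSH (not PHF itself)."""

    def __init__(self, dim, bits=8, seed=0):
        rng = random.Random(seed)
        # One random hyperplane per signature bit.
        self.planes = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(bits)]
        self.buckets = defaultdict(set)

    def _key(self, vec):
        # Signature: which side of each hyperplane the vector falls on.
        return tuple(
            sum(p * v for p, v in zip(plane, vec)) >= 0 for plane in self.planes
        )

    def insert(self, item_id, vec):
        """Index an item under its content signature (a single set insertion)."""
        self.buckets[self._key(vec)].add(item_id)

    def query(self, vec):
        """Return candidate items that share the query's signature."""
        return set(self.buckets.get(self._key(vec), set()))


index = HyperplaneIndex(dim=4, bits=8, seed=42)
features = [0.9, -0.2, 0.4, 0.1]
index.insert("photo-1", features)
same_direction = [2 * x for x in features]  # same content direction, different scale
candidates = index.query(same_direction)
```

A query only inspects one bucket, which is what makes the lookup approximate: near-duplicates usually collide, but this is not guaranteed.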

Distributed (Deep) Machine Learning Community (DMLC)

DMLC is a community of distributed machine learning projects, including the well-known parallel gradient boosted tree library XGBoost and the deep learning system MXNet.

XGBoost is an optimized distributed gradient boosting library designed to be highly efficient, flexible and portable. It implements machine learning algorithms under the Gradient Boosting framework. It has been the winning solution in many Kaggle machine learning competitions.
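For readers unfamiliar with the Gradient Boosting framework, the sketch below shows the core loop on one-dimensional data with squared loss: each round fits a decision stump to the current residuals (the negative gradient) and adds a damped copy of it to the ensemble. This is a teaching sketch and not XGBoost's implementation or API. All function names and the toy data are hypothetical.

```python
def fit_stump(xs, residuals):
    """Find the single-threshold split that minimizes squared error."""
    best = None
    for threshold in sorted(set(xs)):
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        if not left or not right:
            continue
        lmean = sum(left) / len(left)
        rmean = sum(right) / len(right)
        err = sum((r - lmean) ** 2 for r in left) + sum((r - rmean) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, threshold, lmean, rmean)
    if best is None:
        # No valid split (all xs identical): fall back to a constant stump.
        mean = sum(residuals) / len(residuals)
        return (float("inf"), mean, mean)
    return best[1:]


def predict_stump(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def boost(xs, ys, rounds=20, learning_rate=0.5):
    """Gradient boosting with squared loss: fit each stump to the residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(xs)
    stumps = []
    for _ in range(rounds):
        # For loss 0.5 * (y - p)**2 the negative gradient is the residual y - p.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, x) for p, x in zip(preds, xs)]
    return {"base": base, "learning_rate": learning_rate, "stumps": stumps}


def predict(model, x):
    total = sum(predict_stump(s, x) for s in model["stumps"])
    return model["base"] + model["learning_rate"] * total


xs = [1, 2, 3, 4, 5, 6]
ys = [1.0, 1.0, 1.0, 5.0, 5.0, 5.0]
model = boost(xs, ys)
```

On this step-shaped toy data, every round halves the remaining residual, so the ensemble's predictions approach the two target levels.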

Nan Zhu, a member of CPSLAB, leads the development of the XGBoost jvm-packages and serves as a committee member of DMLC. The main goal of the XGBoost jvm-packages is to achieve seamless integration between XGBoost and JVM-based parallel data processing systems such as Apache Spark. With this integration, users get both the convenient interfaces of systems like Spark and the high performance of XGBoost.

You can check the release blog.

- N. Zhu, L. Rao, X. Liu, "PD2F: Running a Parameter Server within a Distributed Dataflow Framework," Workshop on Machine Learning Systems at Neural Information Processing Systems (NIPS), 2015.

- M. Xia, N. Zhu, S. Elnikety, X. Liu, and Y. He, "Performance Inconsistency in Large Scale Data Processing Clusters," presented at the Proceedings of the 10th International Conference on Autonomic Computing (ICAC 13), 2013, pp. 297–302.

Realtime Systems and Applications

- W. Dong, C. Chen, J. Bu, X. Liu, and Y. Liu, "D2: Anomaly Detection and Diagnosis in Networked Embedded Systems by Program Profiling and Symptom Mining," in 34th IEEE International Real-Time Systems Symposium (RTSS), 2013, pp. 202–211.

- W. Liu, M. Yuan, X. He, Z. Gu, and X. Liu, "Efficient SAT-Based Mapping and Scheduling of Homogeneous Synchronous Dataflow Graphs for Throughput Optimization," in 29th IEEE International Real-Time Systems Symposium (RTSS), 2008, pp. 492–504.

- J. Heo, D. Henriksson, X. Liu, and T. Abdelzaher, "Integrating Adaptive Components: An Emerging Challenge in Performance-Adaptive Systems and a Server Farm Case-Study," in 28th IEEE International Real-Time Systems Symposium (RTSS), 2007, pp. 227–238.

- Q. Wang, X. Liu, J. Hou, and L. Sha, "GD-Aggregate: A WAN Virtual Topology Building Tool for Hard Real-Time and Embedded Applications," in 28th IEEE International Real-Time Systems Symposium (RTSS), 2007, pp. 379–388.

- X. Liu and T. Abdelzaher, "On Non-Utilization Bounds for Arbitrary Fixed Priority Policies," in 12th IEEE Real-Time and Embedded Technology and Applications Symposium (RTAS'06), 2006, pp. 167–178. (Best Paper Award Finalist).

- L. Sha, X. Liu, Y. Lu, and T. Abdelzaher, "Queueing model based network server performance control," in 23rd IEEE International Real-Time Systems Symposium (RTSS), 2002, pp. 81–90.

Sensor Networks

- L. Kong, Q. Xiang, X. Liu, X. Liu, X. Gao, G. Chen, M. Wu. "ICP: Instantaneous Clustering Protocol for Wireless Sensor Networks". Elsevier Computer Networks (COMNET), Vol. 101, pp. 144-157, 2016.

- L. Kong, M. Xia, X. Y. Liu, M. Y. Wu, and X. Liu, "Data loss and reconstruction in sensor networks," in 2013 Proceedings IEEE INFOCOM, 2013, pp. 1654–1662. (Acceptance rate=17%, 280 out of 1613).

- Y. Gao, W. Dong, C. Chen, J. Bu, G. Guan, X. Zhang, and X. Liu, "Pathfinder: Robust path reconstruction in large scale sensor networks with lossy links," in 2013 21st IEEE International Conference on Network Protocols (ICNP), 2013, pp. 1–10. (Acceptance rate=18%, 46 out of 251).

- W. Dong, Y. Liu, C. Wang, X. Liu, C. Chen, and J. Bu, "Link quality aware code dissemination in wireless sensor networks," in 2011 19th IEEE International Conference on Network Protocols, 2011, pp. 89–98. (Acceptance rate = 16.4%, 31 out of 189).

- X. Liu and T. Abdelzaher, "Nonutilization Bounds and Feasible Regions for Arbitrary Fixed-priority Policies," ACM Trans. Embed. Comput. Syst., vol. 10, no. 3, p. 31:1–31:25, May 2011.

- W. He, X. Liu, H. V. Nguyen, K. Nahrstedt, and T. Abdelzaher, "PDA: Privacy-Preserving Data Aggregation for Information Collection," ACM Trans. Sen. Netw., vol. 8, no. 1, p. 6:1–6:22, Aug. 2011.

Driving Safety Application

A large number of car accidents occur at intersections every year, mainly due to drivers' "illegal maneuvers" or "unsafe behavior". To promote traffic safety, we present SafeCam, a smartphone-based system that jointly leverages vehicle dynamics and real-time traffic control information (e.g., traffic signals) to detect and study dangerous driver behaviors at intersections.

In particular, SafeCam uses the phone's embedded inertial sensors to generate soft hints that track different driving conditions, while vision-based algorithms recognize intersection-related critical driving events, including unsafe turns, running stop signs, and running red lights. To improve system efficiency, we use adaptive color filtering under two lighting conditions (sunny and cloudy) and apply subsampling to trade off detection rate against processing latency.
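The combination of lighting-dependent color filtering and subsampling can be sketched as follows. This is a hypothetical illustration rather than SafeCam's actual pipeline: the threshold values, the brightness rule for choosing a lighting condition, and the function names are all assumptions. A frame is represented as a nested list of (r, g, b) tuples.

```python
# Hypothetical per-condition thresholds for deciding that a pixel is "red".
LIGHTING_THRESHOLDS = {
    "sunny": {"min_red": 200, "max_other": 90},
    "cloudy": {"min_red": 150, "max_other": 110},
}


def subsample(image, stride):
    """Keep every stride-th pixel in both dimensions to cut processing cost."""
    return [pixel for row in image[::stride] for pixel in row[::stride]]


def estimate_lighting(pixels):
    """Assumed rule: a bright frame counts as sunny, a dark one as cloudy."""
    mean_brightness = sum(sum(p) / 3 for p in pixels) / len(pixels)
    return "sunny" if mean_brightness >= 128 else "cloudy"


def red_light_score(image, stride=2):
    """Return (fraction of sampled pixels that pass the red filter, sample count)."""
    pixels = subsample(image, stride)
    limits = LIGHTING_THRESHOLDS[estimate_lighting(pixels)]
    red = sum(
        1
        for r, g, b in pixels
        if r >= limits["min_red"]
        and g <= limits["max_other"]
        and b <= limits["max_other"]
    )
    return red / len(pixels), len(pixels)


red_frame = [[(220, 40, 40)] * 4 for _ in range(4)]
gray_frame = [[(200, 200, 200)] * 4 for _ in range(4)]
red_score, sampled = red_light_score(red_frame, stride=2)
gray_score, _ = red_light_score(gray_frame, stride=2)
```

A larger `stride` inspects fewer pixels per frame, which lowers latency at the risk of missing small signal lights. That is the detection-rate versus latency trade-off described above.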

Selected publications:

- L. Jiang, X. Chen, and W. He, "SafeCam: Analyzing intersection-related driver behaviors using multi-sensor smartphones," in 2016 IEEE International Conference on Pervasive Computing and Communications (PerCom), 2016, pp. 1–9.

A Study of Facebook Likes

SafeVChat: Safety and Security in Online Video Chat Systems

Selected publications:

ISC: Adult Account Detection on Twitter

Selected publications: